My Rox Engineering Spring Internship

During my Spring internship at Rox, I owned several core platform and product systems, designing and building them end-to-end. There were no barriers between full-time employees and interns, and I was given a very high degree of trust and ownership. I was lucky to work on several very different projects, discussed below, which let me keep refining my engineering skills across a wide variety of domains. This post dives into the systems I built and the technical challenges behind them.

Data Columns Optimization & Unified Warehouse Abstraction (Platform Work)

The Rox knowledge graph leverages Spark as a warehouse-agnostic compute layer, connecting to any one of our supported warehouses (Snowflake, Databricks, BigQuery, etc.) and performing large data transformations to compute the knowledge graph structure. Every 30 minutes, Spark jobs build a new version of the Rox knowledge graph for every single Rox customer. This gives excellent performance while keeping our system flexible enough to support the many systems that enterprise customers use in their businesses.

Migrating Private Data Columns to Spark: Compute & Transport

Summary: Snapshot copying for private data columns was the last component in our platform system that was not warehouse agnostic. I migrated this code path to Spark while improving performance and simplifying execution.

Building a truly warehouse-agnostic platform is a top business priority for Rox, and most of the system had already been migrated to Spark before my internship began. The primary exception was a system responsible for computing and copying snapshots of “private data columns” values into Rox’s PostgreSQL database for quick access. This system allows customers to configure new data for Rox dashboards and bring new custom fields into the Rox system for agents, without having to access the warehouse directly. I was tasked with this migration, eliminating the last usages of Snowpark (our former, Snowflake-native compute layer) in Rox.

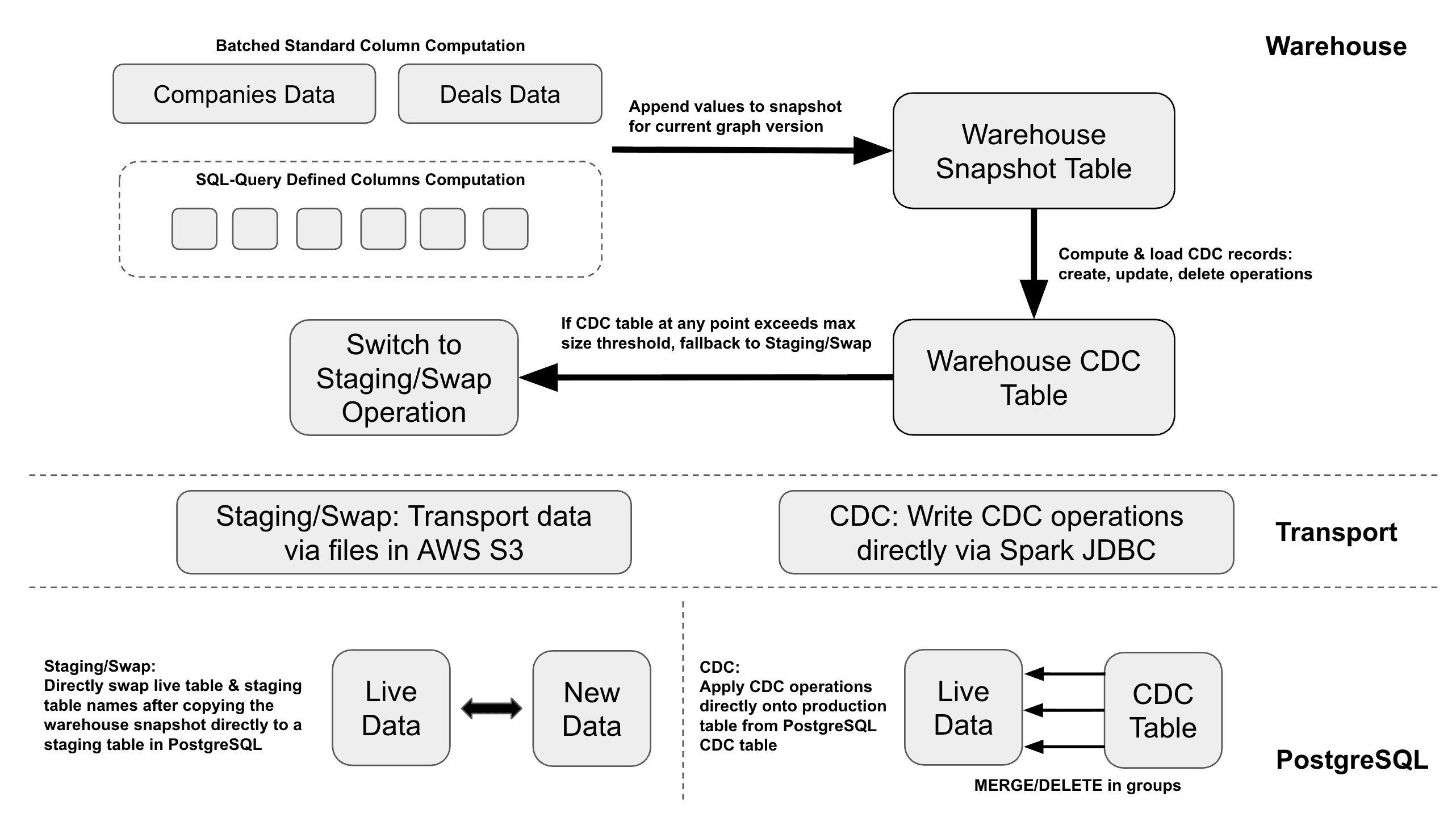

The first challenge in this process was wading through the existing codebase for computing and syncing private data column values, which included a dozen feature flags (fun!), and then determining which elements needed to be ported to the new system. I then began rearchitecting the system, with guidance from my colleagues. This required redesigning both the compute itself and the snapshot transport to our PostgreSQL database. Similar to the Rox knowledge graph, we compute private data column values in the organization’s warehouse before transferring snapshots into PostgreSQL. The data columns code formerly fanned out computation and transfer to many worker tasks, each assigned a separate group of columns. We decided to eliminate this fan-out approach in favor of a single job for three reasons: the non-trivial setup time of Databricks jobs caused by dependency installation, the opportunity to merge snapshot generation queries, and the ability to perform a single CDC (Change Data Capture) computation for updates. CDC is an operation that determines which records require INSERT, UPDATE, or DELETE actions by comparing new and old data on a specified join key.
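
To make the CDC definition concrete, here is a minimal pure-Python sketch of the comparison on a join key. It is only an illustration: the real computation runs in Spark over warehouse tables, and the function name `compute_cdc` and the sample rows are made up for this example.

```python
from typing import Any, Dict, List, Tuple

Row = Dict[str, Any]


def compute_cdc(old_rows: List[Row], new_rows: List[Row], key: str) -> List[Tuple[str, Row]]:
    """Return the (action, row) pairs needed to turn old_rows into new_rows."""
    old_by_key = {row[key]: row for row in old_rows}
    new_by_key = {row[key]: row for row in new_rows}

    changes: List[Tuple[str, Row]] = []
    for k, new_row in new_by_key.items():
        if k not in old_by_key:
            changes.append(("INSERT", new_row))   # key only exists in the new data
        elif old_by_key[k] != new_row:
            changes.append(("UPDATE", new_row))   # key exists in both, values differ
    for k, old_row in old_by_key.items():
        if k not in new_by_key:
            changes.append(("DELETE", old_row))   # key disappeared from the new data
    return changes


# Hypothetical snapshot rows, joined on "id".
old = [{"id": 1, "score": 10}, {"id": 2, "score": 20}, {"id": 3, "score": 30}]
new = [{"id": 1, "score": 10}, {"id": 2, "score": 25}, {"id": 4, "score": 40}]
cdc = compute_cdc(old, new, key="id")
for action, row in cdc:
    print(action, row)
```

Unchanged rows (here `id` 1) produce no action at all, which is why applying a CDC result is usually far cheaper than rewriting the whole snapshot.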

My code ultimately merged what was formerly many warehouse data “snapshot” tables (proportional to the number of columns) into a single snapshot table for all of an organization’s private data columns. This unlocked the ability to perform a single CDC computation, leveraging Spark parallelism to achieve far greater performance. This change, along with several others, resulted in the new system achieving substantial wall-clock performance gains (varies widely by org) over the fan-out approach.

After CDC computation, I leveraged Spark JDBC to write a new CDC table to PostgreSQL directly and then apply the CDC diffs via MERGE statements, branching on size to avoid timeouts. If the CDC table in the warehouse exceeds a given size threshold, we preemptively abandon the MERGE path and perform a staging & swap operation instead. This requires writing to S3 via Spark and then batch copying the generated S3 files into a clean staging table in PostgreSQL (identical to the production table). In this case, we then simply swap table names to complete the operation. Due to the complexity of the code path itself (the details of which I will spare the reader!), this project ended up being quite involved. Despite that, I really enjoyed working with Spark, attempting to optimize this system at scale, and seeing some of my deep platform work drive real customer value.
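
The size-based branching can be pictured roughly as follows. This is a sketch under assumptions, not the production code path: the threshold, the table names, and the helper names `choose_strategy` and `swap_statements` are hypothetical, and the rename sequence is just one common way to swap tables in PostgreSQL.

```python
from typing import List


def choose_strategy(cdc_row_count: int, max_merge_rows: int) -> str:
    """Pick how to apply a CDC result, based on its size (threshold is hypothetical)."""
    return "merge" if cdc_row_count <= max_merge_rows else "staging_swap"


def swap_statements(table: str, staging: str) -> List[str]:
    """SQL for promoting a fully loaded staging table by renaming, in one transaction."""
    retired = f"{table}_old"
    return [
        "BEGIN",
        f"ALTER TABLE {table} RENAME TO {retired}",
        f"ALTER TABLE {staging} RENAME TO {table}",
        f"DROP TABLE {retired}",
        "COMMIT",
    ]


# Hypothetical sizes and table names.
small = choose_strategy(cdc_row_count=5_000, max_merge_rows=1_000_000)
large = choose_strategy(cdc_row_count=50_000_000, max_merge_rows=1_000_000)
statements = swap_statements("private_data_columns", "private_data_columns_staging")
print(small, large)
print(";\n".join(statements))
```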

Before:

Snowpark compute, Snowflake-native; cannot access other warehouses

Fan-out jobs per column group with independent snapshot append & CDC computations

Many snapshot tables (hundreds for some organizations)

Cannot run locally reliably due to transfer issues

After:

Warehouse agnostic using single Spark job with substantially reduced wall-clock time

Unified snapshot table and single CDC pass, leveraging parallelism

Can run locally with clean data transfer setup

12 feature flags --> 0 feature flags (massive difference in code quality, readability)

Unified Warehouse Layer Abstraction

Summary: I built a unified abstraction layer over all supported warehouses to allow for easily adding new warehouses in the future.

I built a new, unified warehouse connection abstraction layer for Rox's platform, which my work on rearchitecting private data columns made possible. Previously, each code path that queried a warehouse needed to branch on storage system type. This implementation replaced that scattered branching with a single `WarehouseConnector` interface and a factory that resolves the correct connection type per organization. This makes adding new warehouse backends in the future much easier.
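
As a rough illustration of the interface-plus-factory shape, consider the sketch below. Only the name `WarehouseConnector` comes from the real system; the method `run_query`, the concrete classes, the registry, and the org-to-warehouse mapping are all hypothetical stand-ins.

```python
from abc import ABC, abstractmethod
from typing import Callable, Dict, List


class WarehouseConnector(ABC):
    """Single interface that calling code depends on, whatever the backend."""

    @abstractmethod
    def run_query(self, sql: str) -> List[dict]:
        ...


class SnowflakeConnector(WarehouseConnector):
    def run_query(self, sql: str) -> List[dict]:
        return [{"backend": "snowflake", "sql": sql}]  # stub instead of a real query


class BigQueryConnector(WarehouseConnector):
    def run_query(self, sql: str) -> List[dict]:
        return [{"backend": "bigquery", "sql": sql}]  # stub instead of a real query


# Adding a new warehouse means adding one class and one registry entry.
CONNECTORS: Dict[str, Callable[[], WarehouseConnector]] = {
    "snowflake": SnowflakeConnector,
    "bigquery": BigQueryConnector,
}

# Hypothetical per-organization configuration.
ORG_WAREHOUSE = {"org-a": "snowflake", "org-b": "bigquery"}


def connector_for_org(org_id: str) -> WarehouseConnector:
    """Factory: resolve the right connector type for an organization."""
    return CONNECTORS[ORG_WAREHOUSE[org_id]]()


rows_a = connector_for_org("org-a").run_query("SELECT 1")
rows_b = connector_for_org("org-b").run_query("SELECT 1")
print(rows_a, rows_b)
```

Callers never branch on storage type; the only place that knows about concrete backends is the registry.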

The primary component of this project was implementing a generic concurrent session pool, parameterized by session type, that manages session checkout, validation, creation, and return across threads. Correctness was critical here, with the pool leveraging a semaphore for capacity bounding, a thread-safe queue for idle sessions, and a cooperative shutdown event, with a three-phase checkout process that drains invalid sessions, attempts creation, then poll-waits with periodic retry. A background daemon thread handles idle session cleanup. I validated these invariants with concurrency stress tests, including high-contention scenarios.
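
The pool design described above can be sketched in a few dozen lines. This is a simplified approximation, not the actual implementation: the class and method names are invented, the background cleanup thread is omitted, and the timings are arbitrary.

```python
import queue
import threading
import time
from typing import Callable, Generic, Optional, TypeVar

S = TypeVar("S")


class SessionPool(Generic[S]):
    def __init__(self, create: Callable[[], S], is_valid: Callable[[S], bool],
                 max_sessions: int, poll_interval: float = 0.01) -> None:
        self._create = create
        self._is_valid = is_valid
        self._capacity = threading.Semaphore(max_sessions)  # one permit per live session
        self._idle: "queue.Queue[S]" = queue.Queue()         # thread-safe idle sessions
        self._shutdown = threading.Event()                    # cooperative shutdown flag
        self._poll_interval = poll_interval

    def _take_valid_idle(self) -> Optional[S]:
        """Pop idle sessions until a valid one appears; invalid ones give back their permit."""
        while True:
            try:
                session = self._idle.get_nowait()
            except queue.Empty:
                return None
            if self._is_valid(session):
                return session
            self._capacity.release()

    def checkout(self, timeout: float = 5.0) -> S:
        deadline = time.monotonic() + timeout
        while not self._shutdown.is_set():
            session = self._take_valid_idle()          # phase 1: reuse, draining invalid
            if session is not None:
                return session
            if self._capacity.acquire(blocking=False):  # phase 2: create if under capacity
                try:
                    return self._create()
                except Exception:
                    self._capacity.release()
                    raise
            if time.monotonic() >= deadline:            # phase 3: poll-wait, then retry
                raise TimeoutError("no session available")
            self._shutdown.wait(self._poll_interval)
        raise RuntimeError("pool is shut down")

    def checkin(self, session: S) -> None:
        self._idle.put(session)

    def shutdown(self) -> None:
        self._shutdown.set()


# Small contention test: 8 threads share a pool capped at 3 sessions.
created = []
stats = {"in_use": 0, "peak": 0, "completed": 0}
lock = threading.Lock()


def make_session() -> object:
    session = object()
    with lock:
        created.append(session)
    return session


pool = SessionPool(create=make_session, is_valid=lambda s: True, max_sessions=3)


def worker() -> None:
    for _ in range(20):
        session = pool.checkout()
        with lock:
            stats["in_use"] += 1
            stats["peak"] = max(stats["peak"], stats["in_use"])
        time.sleep(0.001)  # pretend to run a query
        with lock:
            stats["in_use"] -= 1
            stats["completed"] += 1
        pool.checkin(session)


threads = [threading.Thread(target=worker) for _ in range(8)]
for t in threads:
    t.start()
for t in threads:
    t.join()
print(len(created), stats)
```

The invariant worth testing is that the number of live sessions, idle or checked out, never exceeds the semaphore's capacity, no matter how the threads interleave.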

Rox Agent Workflows (Agents Work)

During my Rox internship, I was a core member of the team building Rox Agent Workflows, preparing for our launch. Agent workflows are one of the most anticipated new products in Rox for 2026 and are a critical step toward the overall goal of transitioning Rox from a passive tool, capable of running agents on command, to an active engine, running background agents at scale to automate work.

Rox workflows run on Temporal’s workflow execution engine, engineered for running durable, reliable, long-running tasks inside of a distributed execution environment. My work for Rox workflows included leading the development of control flow, the workflows debugger, transitioning the workflows compiler to support recursive structure, and more.

What are Rox Agent Workflows?

Rox Agent Workflows is a framework for configuring deterministic processes, powered by Rox Agents. You can think of Rox Workflows as programs, with the process of configuring and testing a Rox workflow nearly identical to the process of programming. To build a Rox workflow, users select a sequence of “action blocks.” Each block has a defined set of typed inputs and outputs and is generally meant to be an “atomic” action. Enriching a lead, generating an email, and sending a notification are all examples of simple action blocks in workflows. On the backend, we define each action block as a new Temporal activity via our package registry.

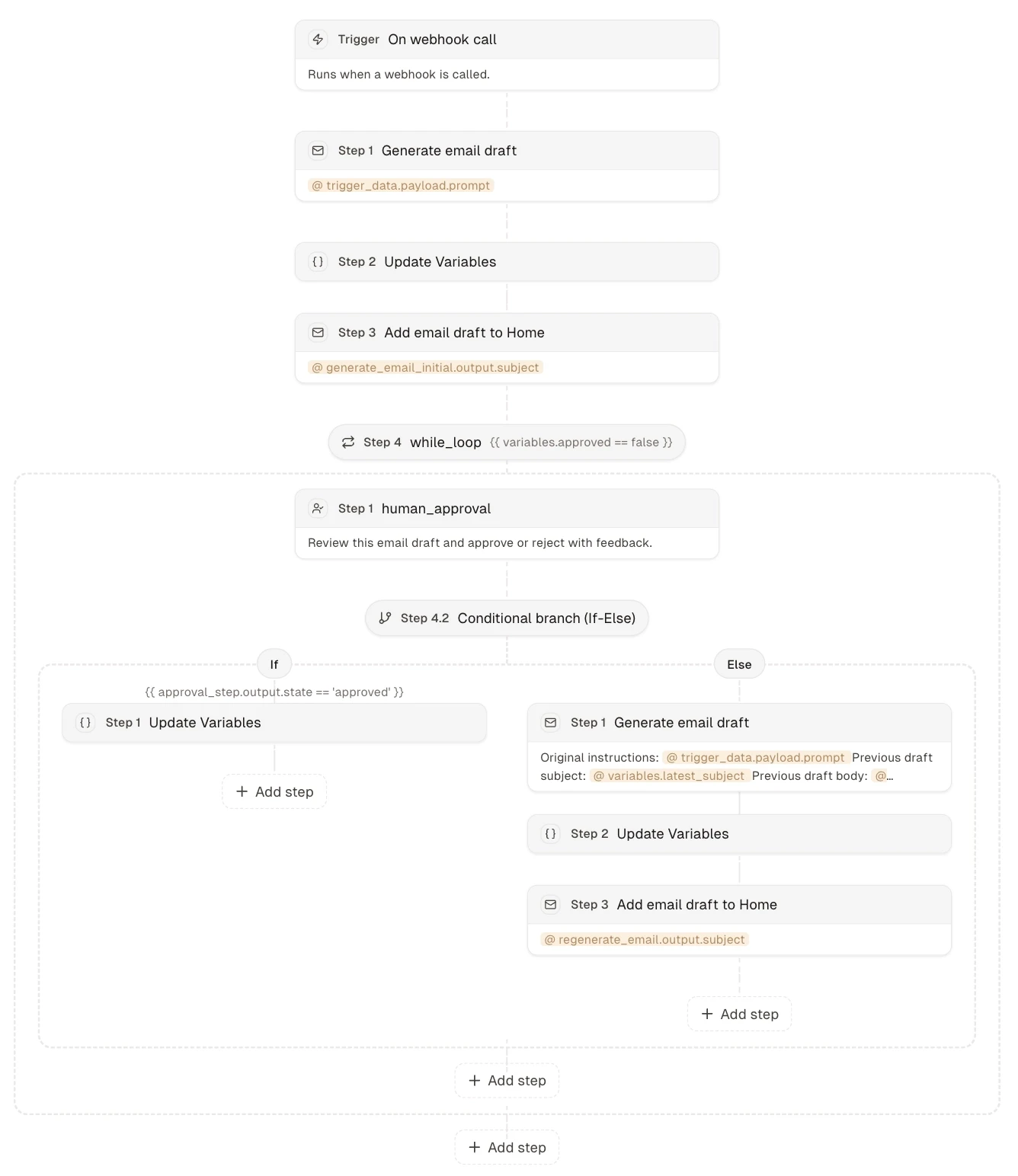

This is an example Rox workflow, defined with a “trigger” (i.e. the event that launches execution) and a set of “steps”: the sequence of action blocks and control flow that manages the execution.

Rox Workflows Static Compiler

Summary: I extended the Workflows static compiler to handle recursive control flow and more.

The ability for action blocks to produce outputs and downstream blocks to receive inputs generated by previous blocks is critical for the vast majority of common workflows use cases. But how can we pass data to workflows blocks at workflow definition time when that data hasn’t been computed yet? We leverage Jinja, a Python templating engine, to allow users to define this data flow. Data generated by past blocks can be accessed via static data “references” using Jinja. At runtime, we resolve Jinja templates into real values using these references. As a simple example, imagine that you have a two-step workflow, StepA --> StepB, where StepA produces an output named value. Then, when you define your StepB input, you can simply reference {{ StepA.value }}. Prior to StepB running, this reference will be converted into the actual value produced by StepA by fetching it from the workflows run log or append-only context.
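
The resolution step can be illustrated with a tiny stand-in. To keep the example dependency-free it uses a regular expression instead of Jinja itself, and it only understands the `{{ Step.field }}` form; the `resolve` function, the context layout, and the choice to keep the native type for whole-value references are assumptions made for this sketch.

```python
import re
from typing import Any, Dict

# Matches references of the form {{ StepName.field }}.
REFERENCE = re.compile(r"\{\{\s*(\w+)\.(\w+)\s*\}\}")


def resolve(template: str, context: Dict[str, Dict[str, Any]]) -> Any:
    """Replace step-output references with values from the run context."""
    whole = REFERENCE.fullmatch(template.strip())
    if whole:
        step, field = whole.groups()
        return context[step][field]  # a lone reference keeps its original type
    # References embedded in text are rendered as strings.
    return REFERENCE.sub(lambda m: str(context[m.group(1)][m.group(2)]), template)


# Hypothetical append-only run context after StepA has finished.
run_context = {"StepA": {"value": 42}}

resolved_input = resolve("{{ StepA.value }}", run_context)
message = resolve("StepA produced {{ StepA.value }}", run_context)
print(resolved_input, message)
```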

There is one problem with this approach. How do we know that StepA.value matches the required input type for StepB? Unlike many other workflow tools on the market, Rox workflows include static “compilation” or validation on all input data and variable references in a workflow definition to ensure that types are correct. Workflows that do not pass validation cannot be run, similar to how a compiler for a statically typed programming language catches mistakes before the program ever runs! This makes Rox workflows far more reliable, eliminating an entire class of runtime typing errors for our users before they can cause issues in production. As part of my work, I extended the Workflows compiler to support recursive control flow and perform deeper resolution and compilation on input data.
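
A toy version of this kind of static check might look like the following. The workflow representation (plain dictionaries with type names as strings) and the `validate` function are hypothetical and far simpler than the real compiler; the sketch only handles flat step sequences, not nested control flow.

```python
import re
from typing import Dict, List

REFERENCE = re.compile(r"\{\{\s*(\w+)\.(\w+)\s*\}\}")


def validate(steps: List[dict]) -> List[str]:
    """Check every reference against the declared output types of earlier steps."""
    errors: List[str] = []
    in_scope: Dict[str, Dict[str, str]] = {}  # step name -> {output name: type}
    for step in steps:
        for input_name, (expected_type, template) in step["inputs"].items():
            match = REFERENCE.fullmatch(template.strip())
            if not match:
                continue  # literal values are not checked in this sketch
            ref_step, ref_field = match.groups()
            where = f"{step['name']}.{input_name}"
            if ref_step not in in_scope:
                errors.append(f"{where}: unknown step {ref_step}")
            elif ref_field not in in_scope[ref_step]:
                errors.append(f"{where}: {ref_step} has no output {ref_field}")
            elif in_scope[ref_step][ref_field] != expected_type:
                actual = in_scope[ref_step][ref_field]
                errors.append(f"{where}: expected {expected_type}, got {actual} from {ref_step}.{ref_field}")
        # Outputs only become visible to steps that come later.
        in_scope[step["name"]] = step["outputs"]
    return errors


workflow = [
    {"name": "StepA", "inputs": {}, "outputs": {"value": "number"}},
    {"name": "StepB", "outputs": {}, "inputs": {
        "count": ("number", "{{ StepA.value }}"),   # types agree
        "label": ("string", "{{ StepA.value }}"),   # type mismatch
        "extra": ("string", "{{ StepC.text }}"),    # StepC is never defined
    }},
]
errors = validate(workflow)
for error in errors:
    print(error)
```

Because all of this happens against the definition alone, a workflow with a non-empty error list can be rejected before any step executes.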

Control Flow in Rox Workflows

Summary: I built control flow in Workflows, including loops, conditionals, & waiting for approval.

Loops & Conditionals: I built standard control flow logic expected from a programming language inside Rox workflows, including for-each loops (iterating over a set of values), while loops (iterating until some condition is met), and conditional branching (supporting n-branch switch statement logic). Control flow can be nested recursively and is implemented at the workflow level in Temporal rather than within an activity.

Variables: Rox workflows enforce scope rigorously and workflow action block outputs are immutable. This is valuable for reliability but many common workflow use cases, including the use of any while loops, are impossible to implement without mutable state in workflows. I introduced statically typed “variables” or mutable state to Rox workflows, which can only be updated through special “Update Variables” blocks.

Human-in-the-Loop Approval: Forcing workflows to pause and wait for your approval can be very important, especially for workflows performing critical actions. I implemented human-in-the-loop approval for workflows by creating a new “Human Approval” workflows block that pauses workflow execution until receiving a Temporal signal sent by a user-facing endpoint. Waiting Temporal workflows are given timeouts and consume very little system resources.
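
The pause-until-signalled behavior can be approximated with plain `asyncio`, used here as a stand-in for Temporal signals. The `ApprovalGate` class, its method names, and the timeout values are invented for this sketch and are not part of Temporal or the Rox codebase.

```python
import asyncio
from typing import Optional


class ApprovalGate:
    """Blocks a running workflow until a decision arrives or a timeout expires."""

    def __init__(self) -> None:
        self._decided = asyncio.Event()
        self._approved: Optional[bool] = None

    def signal(self, approved: bool) -> None:
        # In the real system this would be triggered by the user-facing endpoint.
        self._approved = approved
        self._decided.set()

    async def wait(self, timeout: float) -> str:
        try:
            await asyncio.wait_for(self._decided.wait(), timeout)
        except asyncio.TimeoutError:
            return "timed_out"
        return "approved" if self._approved else "rejected"


async def demo() -> tuple:
    gate = ApprovalGate()
    # Simulate a user approving shortly after the workflow pauses.
    asyncio.get_running_loop().call_later(0.01, gate.signal, True)
    first = await gate.wait(timeout=1.0)
    # A second gate that nobody ever answers.
    second = await ApprovalGate().wait(timeout=0.01)
    return first, second


results = asyncio.run(demo())
print(results)
```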

Workflows Debugger System

Summary: I built a debugger for Workflows to help users gain confidence that their workflows are working properly and to debug them quickly and accurately when failures occur.

Setting up Rox Agent Workflows, even with the help of the Agent Builder, can be challenging. It is extremely important that customers are able to verify that their workflows are configured correctly prior to deploying live in production. We’ve previously alluded to the notion that building Rox workflows is almost identical to programming! Workflow blocks effectively function as lines of code and data flows through workflows just like variables in programming languages. With this in mind, we wanted to give users the ability to “debug” workflows in the same way that software engineers can debug code.

I built the Rox workflows debugger, which supports full step-through debugging of Rox workflows, including breakpoints, pausing, cancellation, etc. We use sse-starlette for SSE or server-sent events endpoints (HTTP endpoints that hold open a long-lived connection for the server to push a stream of events to the client without the client having to poll). This enables a seamless debugging experience where the user can actively monitor workflow status at the individual block level. After all, debugging workflows is difficult without visibility, just like code. On the backend, I leveraged Temporal signals to communicate with workflows on when to step, cancel, pause, etc., while breakpoints must be specified at the start of a test run and will automatically trigger a pause when set. Using the debugger, users can see exactly what data is passed into each action block before execution and quickly determine the root cause of errors or undesired results during test execution.

With the workflows compiler, debugger, and builder, we’ve effectively built a whole LLM-integrated IDE for Rox workflows!

Artifact Revision — Search/Replace & Compound Blocks

Summary: Search & replace works very well for AI agents to revise generated artifacts. We can improve out-of-the-box performance of search & replace by increasing variability in artifact source data, along with strategies like whitespace normalization and built-in retries.

Rox products including Workflows and Command are able to generate “artifacts”, including emails, webpages, and slide decks. This is one of the most used features in the entire Rox product! Rox previously did not support revision capabilities in these artifacts, however, and so artifacts would have to be completely regenerated for every single requested change. Since artifact generation is a key use case for workflows, revision capability was a clear necessity.

I first researched the best methods for revising artifacts with LLMs and quickly determined that search/replace had been repeatedly confirmed to outperform other methods, including unified diffs, for this type of task. As such, I built search & replace revisions in AI-generated artifacts for workflows, which worked quite well almost immediately. Since each search string must match exactly one location to prevent unintended consequences, however, I modified original generation to ensure that agents add unique IDs to repetitive data formats like HTML, so that much smaller unique search strings can be found in artifacts. This improves reliability while reducing output tokens, which lowers latency and cost.
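
A minimal sketch of a single-match search/replace with a whitespace-normalized fallback is shown below. The function name `apply_edit` and the sample HTML are made up for illustration, and retries are left to the caller.

```python
import re


def apply_edit(artifact: str, search: str, replace: str) -> str:
    """Apply one search/replace edit, requiring exactly one match."""
    if not search.strip():
        raise ValueError("search text must not be empty")
    count = artifact.count(search)
    if count == 1:
        return artifact.replace(search, replace, 1)
    if count > 1:
        raise ValueError("search text is ambiguous: it matches more than once")
    # No exact match: retry while treating any run of whitespace as equivalent.
    pattern = r"\s+".join(re.escape(token) for token in search.split())
    matches = list(re.finditer(pattern, artifact))
    if len(matches) != 1:
        raise ValueError(f"expected exactly one match, found {len(matches)}")
    match = matches[0]
    return artifact[:match.start()] + replace + artifact[match.end():]


html = '<ul>\n  <li id="item-1">Pricing</li>\n  <li id="item-2">Pricing</li>\n</ul>'

# The unique id makes a short search string unambiguous.
revised = apply_edit(html, '<li id="item-2">Pricing</li>', '<li id="item-2">Timeline</li>')

# Without the id, the same text appears twice and the edit is rejected.
try:
    apply_edit(html, "Pricing", "Timeline")
    ambiguous_rejected = False
except ValueError:
    ambiguous_rejected = True

# The search text has different whitespace than the artifact; the fallback still finds it.
normalized = apply_edit(html, '<ul> <li id="item-1">Pricing</li>', '<ul><li id="item-1">Plans</li>')
print(revised)
```

A rejected edit is a natural point to retry, for example by asking the agent for a longer, more specific search string.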

Using my revision work, I was able to build special “compound” blocks in Rox workflows for drafting artifacts with review. These compound blocks leverage while loops, human approval, branching, generation, and revision, all inside one single user-facing action block. This allows users to configure complex revision logic inside workflows in a single step and opens the door to creating many more such compound blocks as we monitor the most common workflows use cases in production.

Preparing for Launch Day

The process of preparing for product launch was exciting in its own right: reviewing the product and code for possible bugs, potential optimizations, suboptimal user experiences, and security/abuse issues. I tackled a few important issues during this final launch preparation phase that taught me a lot about what it takes to bring a product from 90% ready to 100% ready, a step that is often disproportionately difficult. Below is a summary of a few of the more interesting items.

For-Loop Parallelism: Looping over large sets of items like accounts or deals and performing actions for each item represents a very common and powerful use case for Rox workflows. For-loops in workflows were originally implemented completely sequentially, despite most common use cases being “embarrassingly parallel”. Since statically compiled Rox workflows strictly enforce scope, we’re able to extract all references in loop sub-blocks and use this reference set to calculate whether a particular for-loop is parallelizable. If a for-loop is parallelizable (which we define as having no dependencies on past iterations and no possible preemptive termination), we are able to spin up concurrent child workflows using Temporal to complete the job in parallel across N workers, massively reducing time.
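
The parallelizability check might be sketched like this. The `Block` structure, the block kinds (including a "break" kind that models early termination), and the conservative rule of rejecting any write to an outer variable are assumptions made for this example rather than a description of the real analysis.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class Block:
    name: str
    kind: str                                       # "action", "update_variables", or "break"
    reads: Set[str] = field(default_factory=set)    # references extracted from the block
    writes: Set[str] = field(default_factory=set)   # variables the block mutates


def blocking_reasons(body: List[Block], outer_variables: Set[str]) -> List[str]:
    """Return the reasons a loop body cannot run its iterations in parallel."""
    # Outer variables mutated inside the loop carry state between iterations.
    carried = {v for block in body for v in block.writes} & outer_variables
    reasons: List[str] = []
    for block in body:
        if block.kind == "break":
            reasons.append(f"{block.name}: loop may terminate early")
        if block.writes & carried:
            reasons.append(f"{block.name}: writes state shared across iterations")
        elif block.reads & carried:
            reasons.append(f"{block.name}: reads state written by other iterations")
    return reasons


def is_parallelizable(body: List[Block], outer_variables: Set[str]) -> bool:
    return not blocking_reasons(body, outer_variables)


independent = [
    Block("enrich", "action", reads={"item"}),
    Block("notify", "action", reads={"enrich.result"}),
]
accumulating = [
    Block("score", "action", reads={"item", "total"}),
    Block("bump_total", "update_variables", reads={"total"}, writes={"total"}),
]
early_exit = [Block("stop_on_match", "break", reads={"item"})]

print(is_parallelizable(independent, outer_variables={"total"}))
print(blocking_reasons(accumulating, outer_variables={"total"}))
```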

Fairness: Our infrastructure concurrency is bounded: we employ a finite number of worker containers, each of which is configured to run a finite number of Temporal workflows concurrently. Temporal workflows wait in a task queue prior to being picked up by workers, so scaling works naturally, but certain issues still arise. For example, imagine that Customer #1 decides to spin up 100 workflows in parallel at exactly the same time, either triggered by a background task or via a burst of webhook calls. Immediately afterwards, Customer #2 launches one small workflow. Is it fair for Customer #2 to have to wait for ALL of Customer #1’s workflows to run prior to getting to run its lone workflow? Of course not! In order to address this problem, I introduced the use of orgId Temporal fairness keys, configuring Temporal to automatically cycle through (via round-robin dispatch) customers’ workflows, ensuring fairness when concurrency limits in the system are reached.
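
The effect of keyed round-robin dispatch can be seen in a toy model. This is not how Temporal implements fairness internally; the `FairDispatcher` class and the workflow IDs are invented purely to show why Customer #2 no longer waits behind all of Customer #1's backlog.

```python
from collections import OrderedDict, deque
from typing import Deque, Tuple


class FairDispatcher:
    """Round-robin over organizations, first-in-first-out within each organization."""

    def __init__(self) -> None:
        self._pending: "OrderedDict[str, Deque[str]]" = OrderedDict()

    def submit(self, org_id: str, workflow_id: str) -> None:
        self._pending.setdefault(org_id, deque()).append(workflow_id)

    def next_workflow(self) -> Tuple[str, str]:
        if not self._pending:
            raise IndexError("no pending workflows")
        org_id, backlog = next(iter(self._pending.items()))
        workflow_id = backlog.popleft()
        if backlog:
            self._pending.move_to_end(org_id)  # this org goes to the back of the line
        else:
            del self._pending[org_id]
        return org_id, workflow_id


dispatcher = FairDispatcher()
for i in range(100):
    dispatcher.submit("customer-1", f"c1-wf-{i}")
dispatcher.submit("customer-2", "c2-wf-0")

order = [dispatcher.next_workflow() for _ in range(101)]
position = order.index(("customer-2", "c2-wf-0"))
print(position)  # index of Customer #2's workflow in the dispatch order
```

With a single first-in-first-out queue, Customer #2's workflow would be dispatched last; with per-organization round-robin it is dispatched second.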

Concurrency Provisioning (Future Project): Fairness alone is not sufficient for complete protection from system abuse. Org-level workflow concurrency limits (i.e. a constraint to indicate that Customer #1 can only run N workflows concurrently) may also be necessary in the future and would be set depending on customer size (i.e. enterprise customers should have higher provisions than self-serve users). We’ve designed concurrency limits for workflows but will only pursue implementation when necessary. The system will employ one concurrency manager long-running workflow per organization, behaving as a semaphore. When a workflow wants to start, it must send a request to the manager and either receives a confirmation to begin or must wait for a signal. When a workflow completes, it notifies the manager, which frees up a slot and signals to the next awaiting workflow that it can begin. This is a natural use case for Temporal signals, making the implementation fairly simple.
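
Since this design is not implemented yet, the following is only a sketch of the manager's bookkeeping, written as an ordinary class rather than a Temporal workflow. The class name, method names, and return conventions are hypothetical; in the described design the two methods would correspond to messages exchanged via signals.

```python
from collections import deque
from typing import Deque, Optional, Set


class ConcurrencyManager:
    """Per-organization semaphore: at most `limit` workflows run at once."""

    def __init__(self, limit: int) -> None:
        self.limit = limit
        self.running: Set[str] = set()
        self.waiting: Deque[str] = deque()

    def request_start(self, workflow_id: str) -> bool:
        """Return True if the workflow may begin now, False if it must wait."""
        if len(self.running) < self.limit:
            self.running.add(workflow_id)
            return True
        self.waiting.append(workflow_id)
        return False

    def notify_complete(self, workflow_id: str) -> Optional[str]:
        """Free a slot and return the next workflow to signal, if any is waiting."""
        self.running.discard(workflow_id)
        if self.waiting:
            next_id = self.waiting.popleft()
            self.running.add(next_id)
            return next_id
        return None


manager = ConcurrencyManager(limit=2)
decisions = [manager.request_start(wf) for wf in ("wf-1", "wf-2", "wf-3")]
released = manager.notify_complete("wf-1")   # frees a slot, wf-3 may now begin
nothing_waiting = manager.notify_complete("wf-2")
print(decisions, released, nothing_waiting)
```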

Security: In preparation for launch, I also worked on patching potential security vulnerabilities in workflows, including routing HTTP block requests through our Bright Data proxy as part of the effort to limit Server-Side Request Forgery (SSRF) risks and introducing stricter controls on Jinja template usage for variable resolution to prevent template injection attacks. Security work like this was very fun and new to think about for me!

Conclusion

We are only just scratching the surface of the interesting engineering challenges required to build reliable, scalable agent systems. Numerous exciting engineering challenges are on the immediate horizon at Rox, including transitioning to a streaming knowledge graph and extending workflows beyond a primarily Rox-native system. I can’t wait to see where this work goes in the future! Thank you so much to the team, especially Damon, Taeuk, and Santhosh, for making my internship experience so wonderful!
